Young Researcher Paper Award 2025

🥇Winners

Print: ISSN 0914-4935

Online: ISSN 2435-0869

Sensors and Materials

is an international peer-reviewed open access journal to provide a forum for researchers working in multidisciplinary fields of sensing technology.

Sensors and Materials

is covered by Science Citation Index Expanded (Clarivate Analytics), Scopus (Elsevier), and other databases.

Instructions to authors

English 日本語

Instructions for manuscript preparation

English 日本語

Template

English

Publisher

MYU K.K.

Sensors and Materials

1-23-3-303 Sendagi,

Bunkyo-ku, Tokyo 113-0022, Japan

Tel: 81-3-3827-8549

Fax: 81-3-3827-8547

MYU Research, a scientific publisher, seeks a native English-speaking proofreader with a scientific background. B.Sc. or higher degree is desirable. In-office position; work hours negotiable. Call 03-3827-8549 for further information.

MYU Research

(proofreading and recording)

MYU K.K.

(translation service)

The Art of Writing Scientific Papers

(How to write scientific papers)

(Japanese Only)

Sensors and Materials, Volume 27, Number 8 (2015)

Copyright(C) MYU K.K.

pp. 755-761

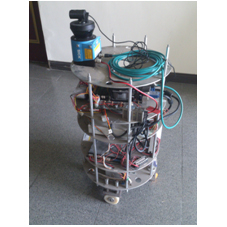

S&M1112 Research Paper of Special Issue

https://doi.org/10.18494/SAM.2015.1106

Published: September 7, 2015

Using Laser Range Finder and Multitarget Tracking-Learning-Detection Algorithm for Intelligent Mobile Robot

Jr-Hung Guo and Kuo-Lan Su

(Received July 2, 2014; Accepted February 10, 2015)

Keywords: laser range finder, multitarget tracking, tracking-learning-detection (TLD), intelligent mobile robot

Self-positioning and obstacle avoidance play an important role in intelligent mobile robot technology. Because of its fast response and accurate measurement, the laser range finder (LRF) is a relatively good choice of sensor for these tasks. However, the LRF has some drawbacks. One is that it usually senses only in a horizontal plane, so an obstacle that does not lie in the detection plane may go undetected. Another is that obstacles with high reflectivity can cause the LRF to produce incorrect detections. Either can put the mobile robot in a dangerous situation. In this paper, a modified tracking-learning-detection (TLD) image recognition system, which can detect multiple targets simultaneously, is used to assist the LRF in positioning and obstacle avoidance.

Corresponding author: Jr-Hung Guo

Cite this article

Jr-Hung Guo and Kuo-Lan Su, Using Laser Range Finder and Multitarget Tracking-Learning-Detection Algorithm for Intelligent Mobile Robot, Sens. Mater., Vol. 27, No. 8, 2015, pp. 755-761.

Forthcoming Regular Issues

Forthcoming Special Issues

Special Issue on Signal Collection, Processing, and System Integration in Automation Applications 2026

Guest editor, Hsiung-Cheng Lin (National Chin-Yi University of Technology), Ming-Te Chen (National Chin-Yi University of Technology), and Chin-Yi Cheng (National Yunlin University of Science and Technology)

Call for paper

Special Issue on Advanced GeoAI for Smart Cities: Novel Data Modeling with Multi-source Sensor Data

Guest editor, Prof. Changfeng Jing (China University of Geosciences Beijing)

Call for paper

Special Issue on Advanced Sensor Application Development

Guest editor, Shih-Chen Shi (National Cheng Kung University) and Tao-Hsing Chen (National Kaohsiung University of Science and Technology)

Call for paper

Special Issue on Sensing Beyond Transduction: Materials, Devices, and Signal Processing for Intelligent Sensory Systems

Guest editor, Masayuki Sohgawa (Niigata University)

Call for paper

Special Issue on Advanced Materials and Technologies for Sensor and Artificial-Intelligence-of-Things Applications (Selected Papers from ICASI 2026)

Guest editor, Sheng-Joue Young (National Yunlin University of Science and Technology)

Conference website

Call for paper

Special Issue on Biosensing Devices

Guest editor, Kiyotaka Sasagawa (Nara Institute of Science and Technology)

Call for paper

For more information on Special Issues (click here)

Special Issue on Mobile Computing and Ubiquitous Networking for Smart Society

- Accepted papers (click here)

- High-precision Autonomous Driving Map Quality Inspection Indicator System and Evaluation Method

Chengcheng Li, Ming Dong, Hongli Li, Xunwen Yu, Yongxuan Liu, and Chong Zhang

- Surface Albedo in Different Land Cover Types in Northeast China

Tao Pan, Fu Li, Yucheng Tao, Lijuan Zhang, and Xiaoyan Jiang

- Accepted papers (click here)

- Design and Development of a Fuzzy-logic-based Long-range Aquaculture System

Sheng-Tao Chen and Tai-I Chou

Guest editor, Akira Uchiyama (The University of Osaka) and Jaehoon Paul Jeong (Sungkyunkwan University)

Call for paper

Special Issue on Novel Sensors, Materials, and Related Technologies on Artificial Intelligence of Things Applications

Guest editor, Teen-Hang Meen (National Formosa University), Wenbing Zhao (Cleveland State University), and Cheng-Fu Yang (National University of Kaohsiung)

Call for paper

Special Issue on Low-altitude Economy: Technologies, Infrastructure, and Applications

Guest editor, He Huang and Junxing Yang (Beijing University of Civil Engineering and Architecture)

Call for paper

Special Issue on Multisource Sensors for Geographic Spatiotemporal Analysis and Social Sensing Technology Part 5

Guest editor, Prof. Bogang Yang (Beijing Institute of Surveying and Mapping) and Prof. Xiang Lei Liu (Beijing University of Civil Engineering and Architecture)

Special Issue on Materials, Devices, Circuits, and Analytical Methods for Various Sensors (Selected Papers from ICSEVEN 2026)

Guest editor, Chien-Jung Huang (National University of Kaohsiung), Mu-Chun Wang (Minghsin University of Science and Technology), Shih-Hung Lin (Chung Shan Medical University), Ja-Hao Chen (Feng Chia University)

Conference website

Call for paper

Special Issue on Sensing and Data Analysis Technologies for Living Environment, Health Care, Production Management, and Engineering/Science Education Applications (2026)

Guest editor, Chien-Jung Huang (National University of Kaohsiung), Rey-Chue Hwang (I-Shou University), Ja-Hao Chen (Feng Chia University), Ba-Son Nguyen (University of Economics Ho Chi Minh City)

Call for paper

Special Issue on Advances in Sensors and Computational Intelligence for Industrial Applications

Guest editor, Chih-Hsien Hsia (National Ilan University)

Call for paper

Special Issue on AI-driven Sustainable Sensor Materials, Processes, and Circular Economy Applications

Guest editor, Shih-Chen Shi (National Cheng Kung University) and Tao-Hsing Chen (National Kaohsiung University of Science and Technology)

Call for paper

Special Issue on Intelligent Sensing and AI-driven Optimization for Sustainable Smart Manufacturing

Guest editor, Cheng-Chi Wang (National Sun Yat-sen University)

Call for paper

- Accepted papers (click here)

Copyright(C) MYU K.K. All Rights Reserved.