Young Researcher Paper Award 2025

🥇Winners

Print: ISSN 0914-4935

Online: ISSN 2435-0869

Sensors and Materials

is an international peer-reviewed open access journal to provide a forum for researchers working in multidisciplinary fields of sensing technology.

Sensors and Materials

is covered by Science Citation Index Expanded (Clarivate Analytics), Scopus (Elsevier), and other databases.

Instructions to authors

English Japanese

Instructions for manuscript preparation

English Japanese

Template

English

Publisher

MYU K.K.

Sensors and Materials

1-23-3-303 Sendagi,

Bunkyo-ku, Tokyo 113-0022, Japan

Tel: 81-3-3827-8549

Fax: 81-3-3827-8547

MYU Research, a scientific publisher, seeks a native English-speaking proofreader with a scientific background. B.Sc. or higher degree is desirable. In-office position; work hours negotiable. Call 03-3827-8549 for further information.

MYU Research

(proofreading and recording)

MYU K.K.

(translation service)

The Art of Writing Scientific Papers

(How to write scientific papers)

(Japanese Only)

Sensors and Materials, Volume 36, Number 2(3) (2024)

Copyright(C) MYU K.K.

pp. 729-743

S&M3557 Research Paper of Special Issue

https://doi.org/10.18494/SAM4788

Published: February 29, 2024

A Smart Assembly Line Design Using Human–Robot Collaborations with Operator Gesture Recognition by Decision Fusion of Deep Learning Channels of Three Image Sensing Modalities from RGB-D Devices

Ing-Jr Ding and Ya-Cheng Juang

(Received September 3, 2023; Accepted February 19, 2024)

Keywords: assembly line, human–robot collaboration, RGB-D image sensor, operator gesture recognition, deep learning, decision fusion

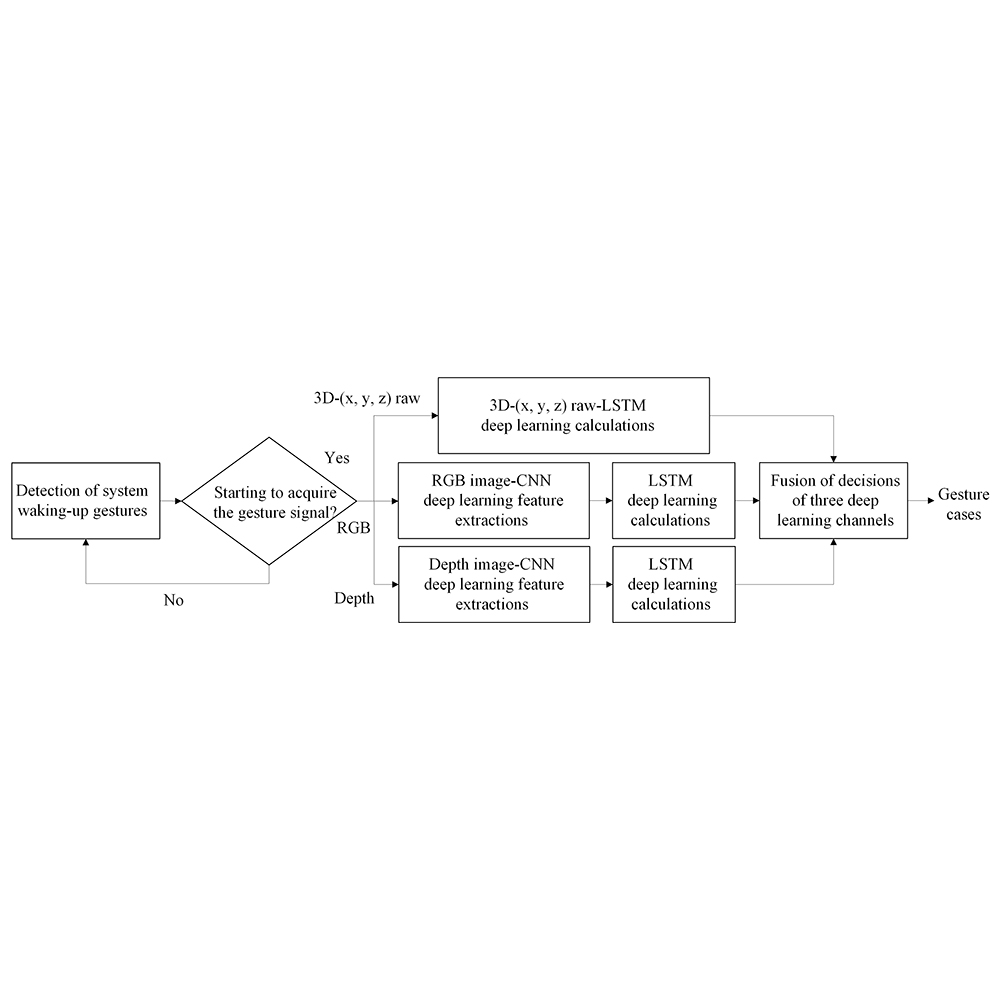

Machine vision with image sensors has been employed in smart manufacturing, for example in the widely used automatic optical inspection (AOI), in which an image acquisition camera optically scans the target device for quality defects. With the rapid progress of image sensor techniques, RGB-D image sensor devices have been developed that can capture an operator's assembly gestures and thereby enable intelligent interaction between a robot and the operator. In this study, we propose a smart assembly-line design for intelligent manufacturing or factory applications that incorporates a human–robot collaboration (HRC) working mode. In the proposed HRC assembly line, the operator and the manipulator (robotic arm) work together, and an appropriately deployed RGB-D image device (an Intel RealSense camera in this work) acquires the operator's assembly gesture data for operator gesture recognition. The manipulator then performs the feedback action that corresponds to the recognized gesture (e.g., grabbing the scissors and then moving to the operator if the tape-winding gesture is recognized). For operator gesture recognition, we first construct three deep learning recognition channels with different sensing modalities: the RGB convolutional neural network (CNN)-long short-term memory (LSTM) channel with RGB gesture image inputs, the depth CNN-LSTM channel with depth gesture image inputs, and the 3D-(x, y, z) LSTM raw channel with raw skeleton data inputs. A decision fusion scheme is then developed to combine the recognition decision outputs of these three separate channels. Various weight combination strategies for the decision fusion are evaluated in terms of operator gesture recognition accuracy. Experiments on the classification of ten categories of operator assembly gestures show that the half-quarter-quarter strategy, with channel decision weights (wRGB, wDepth, w3D) = (0.5, 0.25, 0.25), achieves the highest recognition accuracy.
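
The weighted decision fusion described in the abstract can be illustrated with a minimal Python sketch. It assumes that each channel outputs one score per gesture class (for example, softmax probabilities) and that fusion is a weighted sum followed by an argmax; the abstract does not spell out the exact fusion rule, so this is an illustration under those assumptions rather than the authors' implementation. The function name `fuse_decisions` and the three-class score vectors are hypothetical; only the weights (0.5, 0.25, 0.25) come from the abstract.

```python
from typing import Sequence

# Weights reported in the abstract: (w_RGB, w_Depth, w_3D) = (0.5, 0.25, 0.25).
HALF_QUARTER_QUARTER = (0.5, 0.25, 0.25)


def fuse_decisions(
    channel_scores: Sequence[Sequence[float]],
    weights: Sequence[float] = HALF_QUARTER_QUARTER,
) -> int:
    """Return the index of the class with the highest weighted score.

    channel_scores holds one score vector per channel (RGB, depth,
    3D skeleton), each with one entry per gesture class. The scores are
    assumed to be softmax-like probabilities (an assumption, not stated
    in the abstract).
    """
    if len(channel_scores) != len(weights):
        raise ValueError("need exactly one weight per channel")
    num_classes = len(channel_scores[0])
    if any(len(scores) != num_classes for scores in channel_scores):
        raise ValueError("all channels must score the same number of classes")

    # Weighted sum of the channel scores, computed class by class.
    fused = [
        sum(w * scores[c] for w, scores in zip(weights, channel_scores))
        for c in range(num_classes)
    ]
    # The fused decision is the class with the largest combined score.
    return max(range(num_classes), key=fused.__getitem__)


# Hypothetical scores for three classes (the paper uses ten gesture classes).
rgb = [0.6, 0.3, 0.1]
depth = [0.2, 0.7, 0.1]
skeleton = [0.3, 0.6, 0.1]
predicted = fuse_decisions([rgb, depth, skeleton])
```

In this made-up example the RGB channel alone would pick class 0, but the depth and skeleton channels together outweigh it, so the fused decision is class 1. Passing a different `weights` tuple is how other weight combination strategies could be compared.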

Corresponding author: Ing-Jr Ding

This work is licensed under a Creative Commons Attribution 4.0 International License.

Cite this article

Ing-Jr Ding and Ya-Cheng Juang, A Smart Assembly Line Design Using Human–Robot Collaborations with Operator Gesture Recognition by Decision Fusion of Deep Learning Channels of Three Image Sensing Modalities from RGB-D Devices, Sens. Mater., Vol. 36, No. 2, 2024, p. 729-743.

Forthcoming Regular Issues

Forthcoming Special Issues

Special Issue on Signal Collection, Processing, and System Integration in Automation Applications 2026

Guest editor, Hsiung-Cheng Lin (National Chin-Yi University of Technology), Ming-Te Chen (National Chin-Yi University of Technology), and Chin-Yi Cheng (National Yunlin University of Science and Technology)

Call for paper

Special Issue on Advanced GeoAI for Smart Cities: Novel Data Modeling with Multi-source Sensor Data

Guest editor, Prof. Changfeng Jing (China University of Geosciences Beijing)

Call for paper

Special Issue on Advanced Sensor Application Development

Guest editor, Shih-Chen Shi (National Cheng Kung University) and Tao-Hsing Chen (National Kaohsiung University of Science and Technology)

Call for paper

Special Issue on Mobile Computing and Ubiquitous Networking for Smart Society

Guest editor, Akira Uchiyama (The University of Osaka) and Jaehoon Paul Jeong (Sungkyunkwan University)

Call for paper

Special Issue on Advanced Materials and Technologies for Sensor and Artificial-Intelligence-of-Things Applications (Selected Papers from ICASI 2026)

Guest editor, Sheng-Joue Young (National Yunlin University of Science and Technology)

Conference website

Call for paper

Special Issue on Biosensing Devices

Guest editor, Kiyotaka Sasagawa (Nara Institute of Science and Technology)

Call for paper

Special Issue on Innovations in Multimodal Sensing for Intelligent Devices, Systems, and Applications (submission closed)

- Accepted papers

- Implementation of Deep-Neural-Network–based Unmanned Aerial Vehicle Platform for Fire Smoke Response: Wildfire Smoke Description Experiments

Tae-Hwan Kim, Eun-Su Seo, and Se-Hyu Choi

- User-centric Real-time System for Building Disaster Alerts

Tae-Hwan Kim, Eun-Su Seo, and Se-Hyu Choi

- Accepted papers

- High-precision Autonomous Driving Map Quality Inspection Indicator System and Evaluation Method

Chengcheng Li, Ming Dong, Hongli Li, Xunwen Yu, Yongxuan Liu, and Chong Zhang

- Surface Albedo in Different Land Cover Types in Northeast China

Tao Pan, Fu Li, Yucheng Tao, Lijuan Zhang, and Xiaoyan Jiang

- Accepted papers

- Voltage Reflex and Equalization Charger for Series-connected Batteries

Cheng-Tao Tsai and Jia-Wei Lin

- Accepted papers

- Design and Development of a Fuzzy-logic-based Long-range Aquaculture System

Sheng-Tao Chen and Tai-I Chou

Guest editor, Jiahui Yu (Research scientist, Zhejiang University), Kairu Li (Professor, Shenyang University of Technology), Yinfeng Fang (Professor, Hangzhou Dianzi University), Chin Wei Hong (Professor, Tokyo Metropolitan University), Zhiqiang Zhang (Professor, University of Leeds)

Call for paper

Special Issue on Novel Sensors, Materials, and Related Technologies on Artificial Intelligence of Things Applications

Guest editor, Teen-Hang Meen (National Formosa University), Wenbing Zhao (Cleveland State University), and Cheng-Fu Yang (National University of Kaohsiung)

Call for paper

Special Issue on Low-altitude Economy: Technologies, Infrastructure, and Applications

Guest editor, He Huang and Junxing Yang (Beijing University of Civil Engineering and Architecture)

Call for paper

Special Issue on Multisource Sensors for Geographic Spatiotemporal Analysis and Social Sensing Technology Part 5

Guest editor, Prof. Bogang Yang (Beijing Institute of Surveying and Mapping) and Prof. Xiang Lei Liu (Beijing University of Civil Engineering and Architecture)

Special Issue on Materials, Devices, Circuits, and Analytical Methods for Various Sensors (Selected Papers from ICSEVEN 2025)

Guest editor, Chien-Jung Huang (National University of Kaohsiung), Mu-Chun Wang (Minghsin University of Science and Technology), Shih-Hung Lin (Chung Shan Medical University), Ja-Hao Chen (Feng Chia University)

Conference website

Call for paper

Special Issue on Advances in Sensors and Computational Intelligence for Industrial Applications

Guest editor, Chih-Hsien Hsia (National Ilan University)

Call for paper

Special Issue on AI-driven Sustainable Sensor Materials, Processes, and Circular Economy Applications

Guest editor, Shih-Chen Shi (National Cheng Kung University) and Tao-Hsing Chen (National Kaohsiung University of Science and Technology)

Call for paper

Special Issue on Intelligent Sensing and AI-driven Optimization for Sustainable Smart Manufacturing

Guest editor, Cheng-Chi Wang (National Sun Yat-sen University)

Call for paper

Copyright(C) MYU K.K. All Rights Reserved.