Young Researcher Paper Award 2025

🥇Winners

Print: ISSN 0914-4935

Online: ISSN 2435-0869

Sensors and Materials is an international peer-reviewed open-access journal that provides a forum for researchers working in multidisciplinary fields of sensing technology.

Sensors and Materials is covered by Science Citation Index Expanded (Clarivate Analytics), Scopus (Elsevier), and other databases.

Instructions to authors

English | Japanese (日本語)

Instructions for manuscript preparation

English | Japanese (日本語)

Template

English

Publisher

MYU K.K.

Sensors and Materials

1-23-3-303 Sendagi,

Bunkyo-ku, Tokyo 113-0022, Japan

Tel: 81-3-3827-8549

Fax: 81-3-3827-8547

MYU Research, a scientific publisher, seeks a native English-speaking proofreader with a scientific background. B.Sc. or higher degree is desirable. In-office position; work hours negotiable. Call 03-3827-8549 for further information.

MYU Research (proofreading and recording)

MYU K.K. (translation service)

The Art of Writing Scientific Papers (How to write scientific papers) (Japanese Only)

Sensors and Materials, Volume 38, Number 2(3) (2026)

Copyright(C) MYU K.K.

pp. 935-952

S&M4356 Research paper

https://doi.org/10.18494/SAM5789

Published: February 27, 2026

Large Language Model-guided Data Augmentation for You Only Look Once Version 8-based Printed Circuit Board Defect Detection: Novel Human–AI Codesign Approach

Wei-Hong Lee, Chih-Cheng Chen, and Jin Jiang

(Received June 5, 2025; Accepted February 9, 2026)

Keywords: printed circuit board (PCB) inspection, You Only Look Once version 8 (YOLOv8), data augmentation, large language model (LLM), image quality, automated optical inspection (AOI)

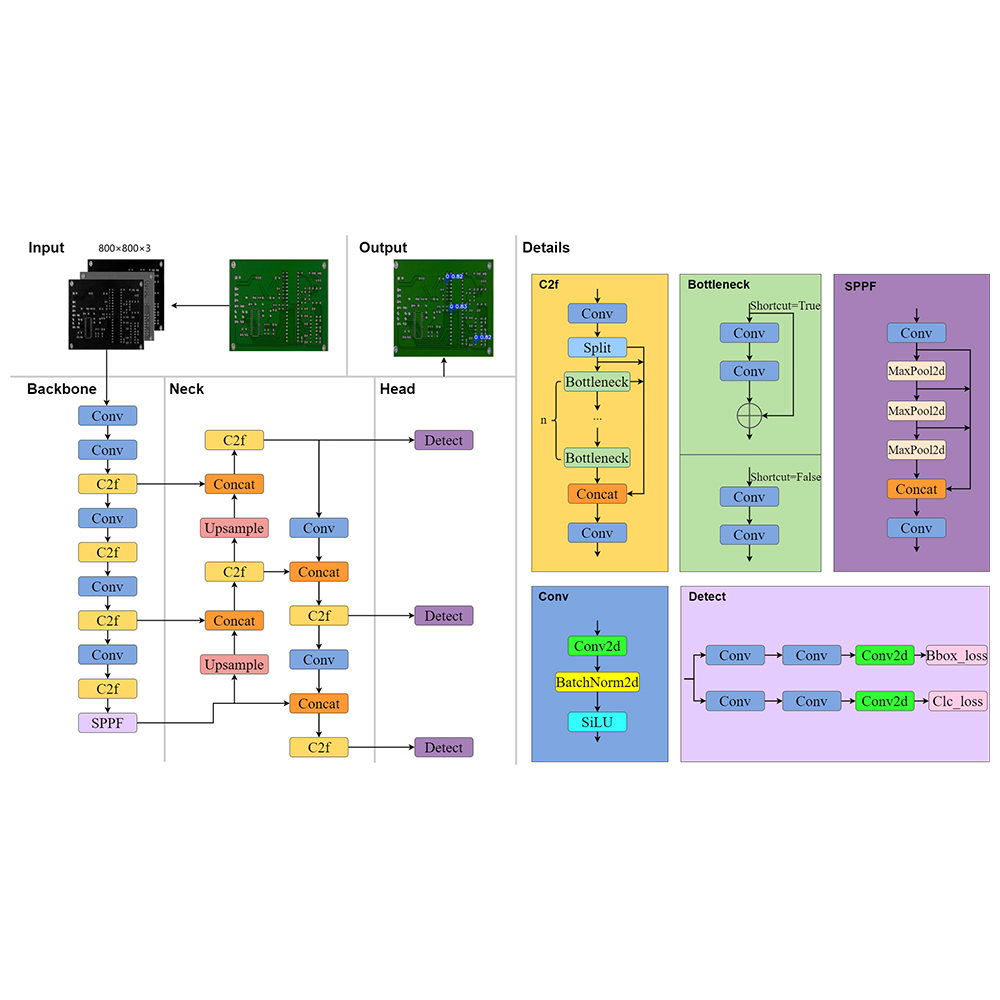

Automated optical inspection (AOI) systems empowered by deep learning are increasingly deployed in smart manufacturing, yet their performance remains vulnerable to real-world imaging distortions such as extreme illumination, blur, and composite artifacts. In this study, we introduce a novel large language model (LLM)-guided data augmentation framework that leverages a human–AI codesign approach to systematically enhance detection robustness in You Only Look Once version 8 (YOLOv8)-based printed circuit board (PCB) defect inspection. Specifically, we employ GPT-4o-mini to analyze class-wise error patterns from a baseline YOLOv8s model trained on a public PCB-defect dataset. While the baseline achieved high accuracy on pristine images [mean average precision (mAP) at an intersection-over-union (IoU) threshold of 0.50, mAP@50 = 97.7%], its performance dropped markedly under five synthetic perturbations, including Gaussian blur (31.6%), motion blur, and extreme brightness. To mitigate these vulnerabilities, we provide the LLM with structured error statistics; in return, it generates a machine-readable augmentation protocol encompassing brightness shifts, exposure modulation, and realistic blur variants, expanding the training dataset by approximately 2.5× without altering the model architecture or hyperparameters. Fine-tuning the model for 25 additional epochs yields substantial improvements across all distortion scenarios, achieving 94.9% mAP@50 on Gaussian blur (+63.3 percentage points), 49.4% on composite distortions (+30.1 percentage points), and 16.4% on motion blur (+8.6 percentage points). Notably, the performance on pristine inputs also improves (99.0% mAP@50), and inference latency remains constant at 19.5 ms per 800 × 800 px frame, confirming zero runtime penalty. These findings validate the efficacy of integrating LLMs as adaptive codesign agents for data-centric vision optimization, offering a scalable and generalizable strategy for resilient AOI in Industry 4.0 environments.
Our study contributes to the advancement of sensors and related materials by enhancing the application of optical sensing concepts in machine-learning-driven PCB defect detection, where sensors like high-resolution cameras capture images prone to distortions; the LLM framework improves detection reliability, offering scalable strategies for Industry 4.0 sensing environments.
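As an illustration only, the idea of a machine-readable augmentation protocol can be sketched in a few lines of dependency-free Python. Everything below is an assumption made for the sketch, not the authors' implementation: the JSON field names (`op`, `delta`, `gain`, `radius`, `length`), the parameter values, the use of a box (mean) filter as a simple stand-in for Gaussian blur, and the representation of a grayscale image as nested lists of 0-255 integers.

```python
import json

# Hypothetical protocol; field names and values are illustrative assumptions,
# not the schema used in the paper.
PROTOCOL_JSON = """
[
  {"op": "brightness", "delta": 40},
  {"op": "exposure", "gain": 1.5},
  {"op": "box_blur", "radius": 1},
  {"op": "motion_blur", "length": 3}
]
"""

def clamp(value):
    """Round to an integer and clip to the 8-bit range 0..255."""
    return max(0, min(255, int(round(value))))

def brightness(img, delta):
    """Additive brightness shift."""
    return [[clamp(p + delta) for p in row] for row in img]

def exposure(img, gain):
    """Multiplicative exposure change."""
    return [[clamp(p * gain) for p in row] for row in img]

def box_blur(img, radius):
    """Mean filter over a (2r+1) x (2r+1) window, clipped at the borders.
    Used here as a simple stand-in for Gaussian blur."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [img[j][i]
                      for j in range(max(0, y - radius), min(h, y + radius + 1))
                      for i in range(max(0, x - radius), min(w, x + radius + 1))]
            row.append(clamp(sum(window) / len(window)))
        out.append(row)
    return out

def motion_blur(img, length):
    """Horizontal motion blur: average each pixel with up to
    length - 1 pixels to its left."""
    out = []
    for row in img:
        out.append([clamp(sum(row[max(0, x - length + 1): x + 1])
                          / len(row[max(0, x - length + 1): x + 1]))
                    for x in range(len(row))])
    return out

OPS = {"brightness": brightness, "exposure": exposure,
       "box_blur": box_blur, "motion_blur": motion_blur}

def augment(img, protocol_json):
    """Return one augmented copy of img per protocol entry."""
    variants = []
    for step in json.loads(protocol_json):
        params = {k: v for k, v in step.items() if k != "op"}
        variants.append(OPS[step["op"]](img, **params))
    return variants

if __name__ == "__main__":
    tiny = [[0, 100, 200], [50, 150, 250]]
    for variant in augment(tiny, PROTOCOL_JSON):
        print(variant)
```

Because these operations change pixel intensities rather than geometry, a pipeline along these lines could plausibly reuse the original bounding-box labels for each variant. A real pipeline would more likely use an image library than nested lists.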

Corresponding author: Jin Jiang

This work is licensed under a Creative Commons Attribution 4.0 International License.

Cite this article: Wei-Hong Lee, Chih-Cheng Chen, and Jin Jiang, Large Language Model-guided Data Augmentation for You Only Look Once Version 8-based Printed Circuit Board Defect Detection: Novel Human–AI Codesign Approach, Sens. Mater., Vol. 38, No. 2, 2026, pp. 935-952.

Forthcoming Regular Issues

Forthcoming Special Issues

Special Issue on Signal Collection, Processing, and System Integration in Automation Applications 2026

Guest editor, Hsiung-Cheng Lin (National Chin-Yi University of Technology), Ming-Te Chen (National Chin-Yi University of Technology), and Chin-Yi Cheng (National Yunlin University of Science and Technology)

Call for paper

Special Issue on Advanced GeoAI for Smart Cities: Novel Data Modeling with Multi-source Sensor Data

Guest editor, Prof. Changfeng Jing (China University of Geosciences Beijing)

Call for paper

Special Issue on Advanced Sensor Application Development

Guest editor, Shih-Chen Shi (National Cheng Kung University) and Tao-Hsing Chen (National Kaohsiung University of Science and Technology)

Call for paper

Special Issue on Mobile Computing and Ubiquitous Networking for Smart Society

Guest editor, Akira Uchiyama (The University of Osaka) and Jaehoon Paul Jeong (Sungkyunkwan University)

Call for paper

Special Issue on Advanced Materials and Technologies for Sensor and Artificial-Intelligence-of-Things Applications (Selected Papers from ICASI 2026)

Guest editor, Sheng-Joue Young (National Yunlin University of Science and Technology)

Conference website

Call for paper

Special Issue on Biosensing Devices

Guest editor, Kiyotaka Sasagawa (Nara Institute of Science and Technology)

Call for paper

For more information on Special Issues (click here)

Special Issue on Innovations in Multimodal Sensing for Intelligent Devices, Systems, and Applications (submission closed)

- Accepted papers (click here)

- Implementation of Deep-Neural-Network–based Unmanned Aerial Vehicle Platform for Fire Smoke Response: Wildfire Smoke Description Experiments

Tae-Hwan Kim, Eun-Su Seo, and Se-Hyu Choi

- User-centric Real-time System for Building Disaster Alerts

Tae-Hwan Kim, Eun-Su Seo, and Se-Hyu Choi

- Accepted papers (click here)

- High-precision Autonomous Driving Map Quality Inspection Indicator System and Evaluation Method

Chengcheng Li, Ming Dong, Hongli Li, Xunwen Yu, Yongxuan Liu, and Chong Zhang

- Surface Albedo in Different Land Cover Types in Northeast China

Tao Pan, Fu Li, Yucheng Tao, Lijuan Zhang, and Xiaoyan Jiang

- Accepted papers (click here)

- Voltage Reflex and Equalization Charger for Series-connected Batteries

Cheng-Tao Tsai and Jia-Wei Lin

- Accepted papers (click here)

- Design and Development of a Fuzzy-logic-based Long-range Aquaculture System

Sheng-Tao Chen and Tai-I Chou

Guest editor, Jiahui Yu (Research scientist, Zhejiang University), Kairu Li (Professor, Shenyang University of Technology), Yinfeng Fang (Professor, Hangzhou Dianzi University), Chin Wei Hong (Professor, Tokyo Metropolitan University), Zhiqiang Zhang (Professor, University of Leeds)

Call for paper

Special Issue on Novel Sensors, Materials, and Related Technologies on Artificial Intelligence of Things Applications

Guest editor, Teen-Hang Meen (National Formosa University), Wenbing Zhao (Cleveland State University), and Cheng-Fu Yang (National University of Kaohsiung)

Call for paper

Special Issue on Low-altitude Economy: Technologies, Infrastructure, and Applications

Guest editor, He Huang and Junxing Yang (Beijing University of Civil Engineering and Architecture)

Call for paper

Special Issue on Multisource Sensors for Geographic Spatiotemporal Analysis and Social Sensing Technology Part 5

Guest editor, Prof. Bogang Yang (Beijing Institute of Surveying and Mapping) and Prof. Xiang Lei Liu (Beijing University of Civil Engineering and Architecture)

Special Issue on Materials, Devices, Circuits, and Analytical Methods for Various Sensors (Selected Papers from ICSEVEN 2025)

Guest editor, Chien-Jung Huang (National University of Kaohsiung), Mu-Chun Wang (Minghsin University of Science and Technology), Shih-Hung Lin (Chung Shan Medical University), Ja-Hao Chen (Feng Chia University)

Conference website

Call for paper

Special Issue on Advances in Sensors and Computational Intelligence for Industrial Applications

Guest editor, Chih-Hsien Hsia (National Ilan University)

Call for paper

Special Issue on AI-driven Sustainable Sensor Materials, Processes, and Circular Economy Applications

Guest editor, Shih-Chen Shi (National Cheng Kung University) and Tao-Hsing Chen (National Kaohsiung University of Science and Technology)

Call for paper

Special Issue on Intelligent Sensing and AI-driven Optimization for Sustainable Smart Manufacturing

Guest editor, Cheng-Chi Wang (National Sun Yat-sen University)

Call for paper

- Accepted papers (click here)

Copyright(C) MYU K.K. All Rights Reserved.